-

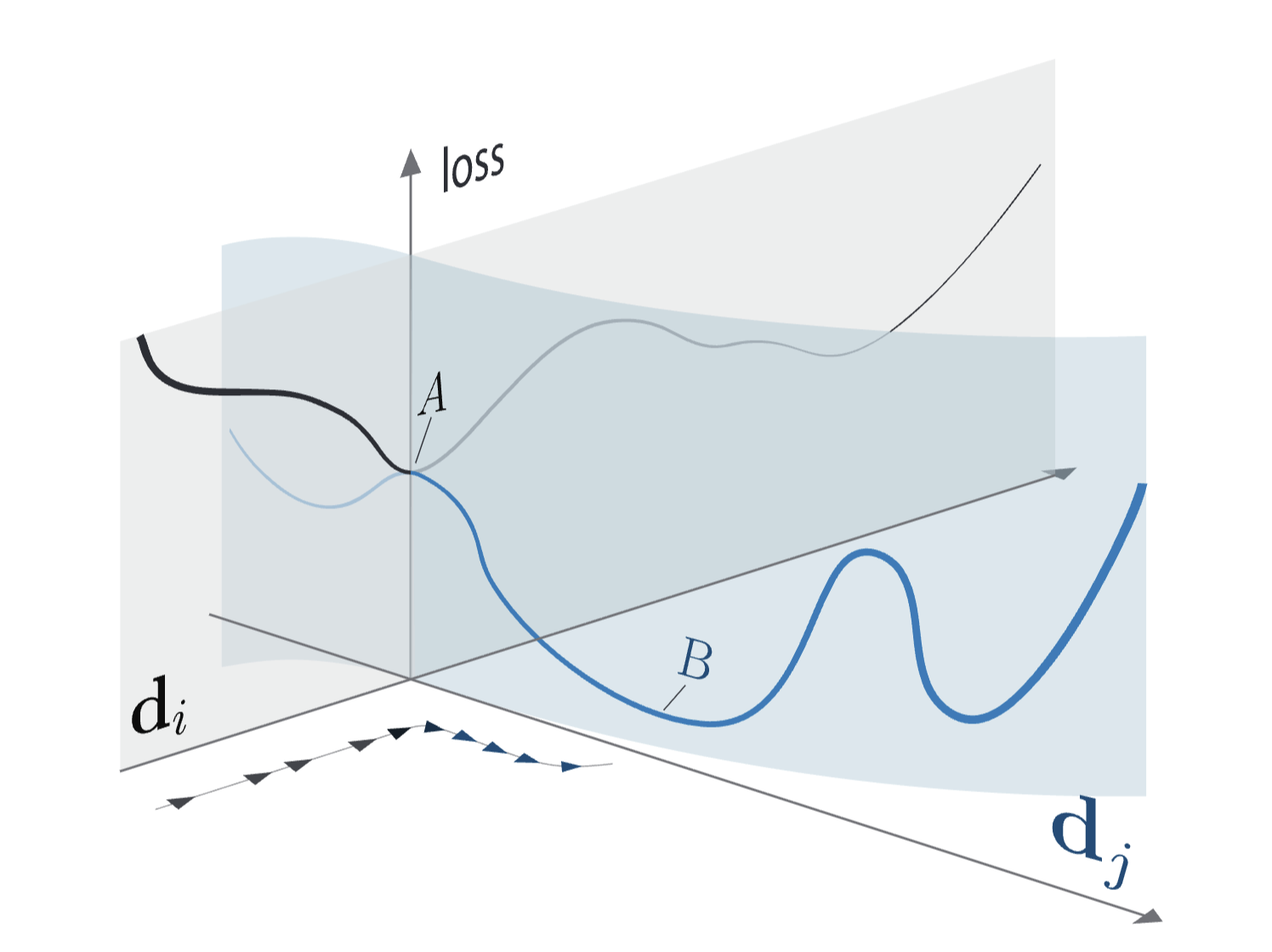

NEW PREPRINT (PDF). Using the metaphor of lottery tickets to explain the success of overparameterization is misleading; we propose a new one: escape dimensions.

The lottery ticket metaphor breaks down under scrutiny. We propose Escape Dimensions Theory as an alternative.

-

Neural networks have minima at infinity. What do they look like?

We call these solutions channels to infinity; they are how standard MLPs implement Gated Linear Units (GLUs).
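One way to see how a pair of ordinary neurons can turn into a GLU is a finite-difference argument, checked numerically below. This is a minimal sketch, not the preprint's code: it assumes the channel consists of two hidden neurons whose input weights merge (`w + eps*u` and `w`) while their output weights diverge as `+a/eps` and `-a/eps`. The softplus activation and the names `neuron_pair` and `gated_linear_unit` are hypothetical choices for illustration. By the definition of the derivative, the pair's output tends to `a * σ'(w·x) * (u·x)`; with softplus, σ' is the logistic sigmoid, so the limit has the classic GLU form `sigmoid(w·x) * (u·x)`.

```python
import math

def softplus(z):
    # Smooth ReLU-like activation (an assumption here); its derivative is the logistic sigmoid.
    return math.log1p(math.exp(z))

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dot(p, q):
    return sum(pi * qi for pi, qi in zip(p, q))

def neuron_pair(x, w, u, a, eps):
    """Hypothetical pair of hidden neurons with input weights w + eps*u and w
    and output weights +a/eps and -a/eps: as eps -> 0 the input weights merge
    while the output weights diverge to +/- infinity."""
    w_shifted = [wi + eps * ui for wi, ui in zip(w, u)]
    return (a / eps) * (softplus(dot(w_shifted, x)) - softplus(dot(w, x)))

def gated_linear_unit(x, w, u, a):
    """Limit of neuron_pair: the linear term u.x gated by sigmoid(w.x)."""
    return a * sigmoid(dot(w, x)) * dot(u, x)

w, u, a = [0.5, -1.0], [2.0, 1.0], 1.5
x = [0.3, 0.7]
for eps in (1e-1, 1e-2, 1e-3):
    gap = abs(neuron_pair(x, w, u, a, eps) - gated_linear_unit(x, w, u, a))
    print(f"eps={eps:g}  |pair - GLU| = {gap:.1e}")
```

The gap shrinks roughly in proportion to `eps`, as the second-order Taylor term predicts, so the pair approaches the gated unit only as its output weights grow without bound.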

-

ReLU Playground: how complex are the dynamics of one neuron learning another one?

An interactive playground ⛹️‍♂️
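The setup behind that question, a student ReLU neuron trained to reproduce a fixed teacher ReLU neuron, fits in a few lines. This is a minimal sketch under stated assumptions, not the playground's implementation: Gaussian inputs, a squared-error loss, full-batch gradient descent, and a hand-picked student initialization close to the teacher. The names `make_data`, `loss_and_grad`, `train` and the hyperparameters `lr` and `steps` are hypothetical.

```python
import random

def relu(z):
    return max(z, 0.0)

def dot(p, q):
    return sum(pi * qi for pi, qi in zip(p, q))

def make_data(n, dim, seed=0):
    # Standard Gaussian inputs (an assumption of this sketch).
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n)]

def loss_and_grad(w, w_teacher, xs):
    """Mean squared error between student and teacher ReLU neurons,
    and its (sub)gradient with respect to the student weights w."""
    loss = 0.0
    grad = [0.0] * len(w)
    for x in xs:
        pre = dot(w, x)
        err = relu(pre) - relu(dot(w_teacher, x))
        loss += 0.5 * err * err
        if pre > 0:  # ReLU derivative taken as 0 at and below the kink
            for i, xi in enumerate(x):
                grad[i] += err * xi
    n = len(xs)
    return loss / n, [g / n for g in grad]

def train(w, w_teacher, xs, lr=0.1, steps=500):
    # Plain full-batch gradient descent; records the loss at every step.
    history = []
    for _ in range(steps):
        loss, grad = loss_and_grad(w, w_teacher, xs)
        history.append(loss)
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w, history

xs = make_data(n=200, dim=2)
w_teacher = [1.0, -0.5]
w_student, history = train([0.6, 0.2], w_teacher, xs)
print(f"loss: {history[0]:.4f} -> {history[-1]:.2e}")
print("student weights:", [round(wi, 3) for wi in w_student])
```

Changing the initial student weights, the learning rate or the input distribution is the natural way to probe the question above with this sketch.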